DeepSeek just cut prices on the most powerful open-source AI model on the market. And it's the first DeepSeek model built to run on Huawei chips instead of Nvidia's.

That changes the AI price war in two ways at once.

Through May 5, coders get 75% off DeepSeek V4-Pro. The cuts go beyond that one model, too.

The full DeepSeek API lineup got cheaper. Cache-hit input pricing is now one-tenth of the old rate.

DeepSeek shared the news on X over the weekend. It has rolled out most of its product news there over the last year.

The math: Cache-hit input that cost $1 under the old pricing now costs 10 cents. That's a big break for coders running large workloads.
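The two headline cuts work as simple multipliers on the old price. Here is a minimal Python sketch using only the ratios reported above: 75% off V4-Pro through May 5, and cache-hit input at one-tenth of its old rate. The $1 baseline and the function names are illustrative assumptions, not DeepSeek figures or APIs. The announcement as described here does not say whether the two discounts stack, so each one is applied on its own.

```python
# Ratios as reported in the announcement; the $1 baseline used below is
# illustrative, not an actual DeepSeek list price.
V4_PRO_PROMO_DISCOUNT = 0.75  # 75% off V4-Pro through May 5
CACHE_HIT_MULTIPLIER = 0.10   # new cache-hit input rate = old cache-hit rate / 10


def promo_price(list_cost: float) -> float:
    """What a V4-Pro charge of `list_cost` becomes under the 75% promotion."""
    return list_cost * (1 - V4_PRO_PROMO_DISCOUNT)


def cache_hit_price(old_cost: float) -> float:
    """What cache-hit input that cost `old_cost` at the old rate costs now."""
    return old_cost * CACHE_HIT_MULTIPLIER


if __name__ == "__main__":
    baseline = 1.00  # hypothetical $1 baseline
    print(f"V4-Pro promo:    ${promo_price(baseline):.2f}")      # $0.25
    print(f"cache-hit input: ${cache_hit_price(baseline):.2f}")  # $0.10
```

Under these ratios, the cache-hit cut is the steeper of the two: 90% off versus 75%.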

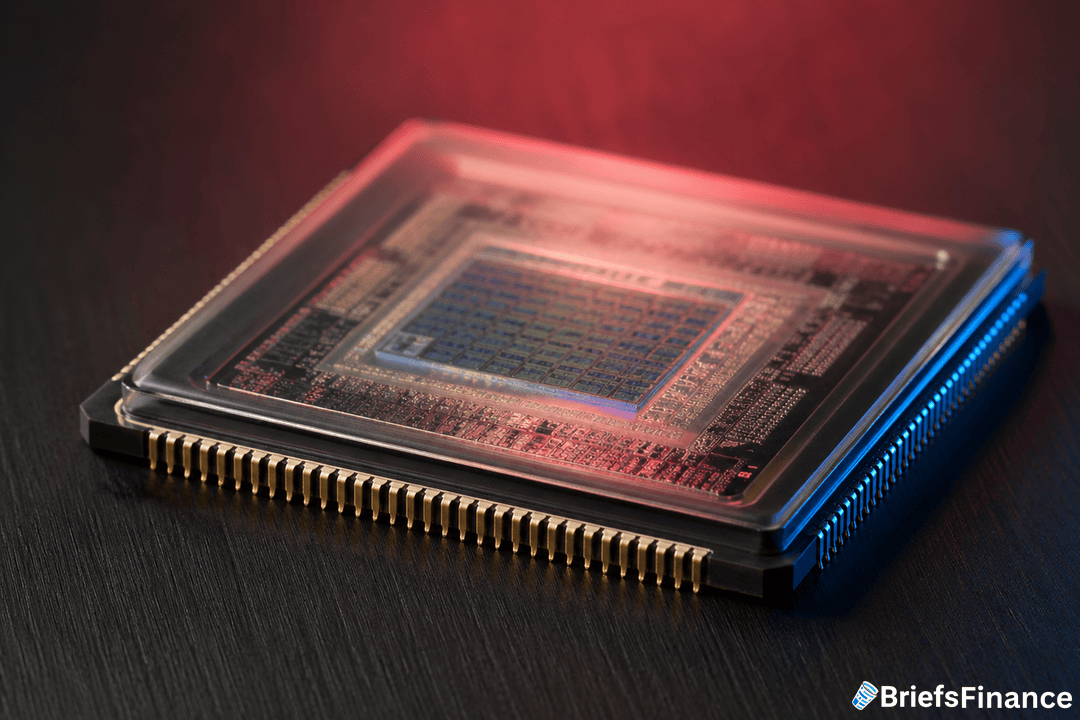

The chip swap is the bigger story. Friday's V4 launch is the first DeepSeek model built for Huawei silicon. The model can run on China's own AI stack with no Nvidia chips needed.

US export rules have been pushing China this way for years. Washington cut off Chinese firms from Nvidia's top chips. The bet was that less compute would slow Chinese AI down.

DeepSeek now has a working stack that doesn't need those chips at all. That's a problem for Nvidia, which counts on global demand for its top chips. It's also a problem for US labs that have priced their models against Nvidia compute.

If Huawei chips can run a model this close to Gemini, the moat around Nvidia hardware starts to look thin.

DeepSeek split V4 into two tiers. The heavier Pro version is built for high-end work. The lighter Flash version is built for cheaper jobs.

The company says V4-Pro beats every other open-source model on world-knowledge tests. Only Google's closed-source Gemini 3.1 Pro is still ahead.

Both tiers are built for AI agents. Those are the systems that handle multi-step jobs like booking travel or going through documents on their own.

Agents burn far more compute than chatbots. That's why a price cut on agent-focused models matters more for coder budgets.
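Part of why agents cost more is how they call a model. With a stateless chat API, a typical agent loop resends its growing history as input on every step, so input tokens pile up much faster than in a single chatbot reply, and much of that resent prefix is the kind of input that cache-hit pricing covers. The sketch below is a rough Python model under stated assumptions, not DeepSeek's billing logic: the token counts, the price, and the cache-hit ratio are hypothetical placeholders, and it assumes every call reuses the previous call's prompt as a cached prefix.

```python
# Hypothetical model of an agent loop's input tokens. All token counts and
# prices here are illustrative placeholders, not DeepSeek figures.


def agent_input_tokens(steps: int, initial_tokens: int, tokens_per_step: int) -> tuple[int, int]:
    """Return (fresh, cached) input tokens summed over an agent run.

    Assumes each call resends the full history, the prompt sent on the
    previous call is served from the cache, and each step appends
    `tokens_per_step` new tokens (tool results, model output, etc.).
    """
    fresh = cached = 0
    prev_prompt = 0  # length of the previous call's prompt
    for i in range(steps):
        prompt = initial_tokens + i * tokens_per_step
        cached += prev_prompt          # prefix already sent on the last call
        fresh += prompt - prev_prompt  # tokens added since the last call
        prev_prompt = prompt
    return fresh, cached


def input_cost(fresh: int, cached: int, price_per_million: float, cache_hit_ratio: float) -> float:
    """Dollar cost of input; cache hits billed at `cache_hit_ratio` of the full price."""
    return (fresh + cached * cache_hit_ratio) * price_per_million / 1_000_000


if __name__ == "__main__":
    # One chatbot call versus a 20-step agent run with the same starting prompt.
    chat_fresh, chat_cached = agent_input_tokens(1, 2_000, 1_000)
    agent_fresh, agent_cached = agent_input_tokens(20, 2_000, 1_000)
    print("chatbot input tokens:", chat_fresh + chat_cached)    # 2000
    print("agent input tokens:  ", agent_fresh + agent_cached)  # 230000
    # Placeholder price and cache-hit ratio, chosen only for illustration.
    print(f"agent input cost: ${input_cost(agent_fresh, agent_cached, 1.0, 0.25):.4f}")
```

In this toy run, 209,000 of the agent's 230,000 input tokens are cached prefix, which is why a cheaper cache-hit rate moves the bill for agent workloads more than for one-off chats.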

Nvidia's stock price assumes the world will keep buying its top chips for AI work. A working alternative built on Huawei silicon undercuts that case.

Chinese cloud firms and coders were already moving to local chips. US export rules all but forced that shift.

DeepSeek's V4 shows what the end of that shift looks like. The price tag is aimed at pulling in global users, not just Chinese ones.

DeepSeek is using price the way Walmart has for years: pull buyers away from rivals with a number they can't match.

With V4-Pro running on Huawei chips at 75% off, US AI labs face a model that's cheaper, free of Nvidia, and almost as good as Gemini.

The May 5 deadline on the 75% V4-Pro discount is the first thing to track. If DeepSeek extends it or makes the cuts permanent, that's a sign the firm is in real share-grab mode. If it lets the discount roll off, the move was likely a launch promotion meant to drive V4 sign-ups.