A single ChatGPT query uses about 10 times the power of a Google search.

Now multiply that by hundreds of millions of users. The math gets ugly fast.

U.S. data centers are now on track to use 12% of all power in the country by 2030. Today, the share is just 4%, per a McKinsey study from 2024.

For investors trying to figure out where the AI boom lives, that gap is the answer.

The Demand Curve

U.S. data center power use is set to jump from 224 terawatt-hours in 2025 to 606 by 2030. That's per Visual Capitalist's read of the McKinsey data.

A terawatt-hour is one trillion watts of power used for an hour.

For scale, the U.K. used about half of that projected 606 terawatt-hours in all of 2023.
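The arithmetic behind that jump can be checked with a quick sketch. It uses only the two terawatt-hour figures quoted above. The steady compound growth rate is an assumption made for illustration, not something the McKinsey data states, and the variable and function names are hypothetical.

```python
# Figures quoted in the article, in terawatt-hours per year.
TWH_2025 = 224
TWH_2030 = 606
YEARS = 2030 - 2025

# One terawatt-hour is one trillion (10**12) watt-hours.
WATT_HOURS_PER_TWH = 10**12


def growth_multiple(start, end):
    """How many times larger the end value is than the start value."""
    return end / start


def implied_annual_growth(start, end, years):
    """Yearly growth rate, ASSUMING smooth compound growth between the two points."""
    return (end / start) ** (1 / years) - 1


multiple = growth_multiple(TWH_2025, TWH_2030)
annual = implied_annual_growth(TWH_2025, TWH_2030, YEARS)
added_wh = (TWH_2030 - TWH_2025) * WATT_HOURS_PER_TWH

print(f"Growth multiple: {multiple:.2f}x")
print(f"Implied annual growth: {annual:.1%}")
print(f"Added demand: {added_wh:.3e} watt-hours per year")
```

Under that smooth-growth assumption, demand would have to rise by roughly a fifth every year to get from 224 to 606 terawatt-hours.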

AI alone could need 50 to 60 gigawatts of new power capacity by 2030. McKinsey thinks the full buildout to support data center demand could cost $500 billion.

That covers new plants, grid upgrades, and water cooling.

Why The Numbers Keep Going Up

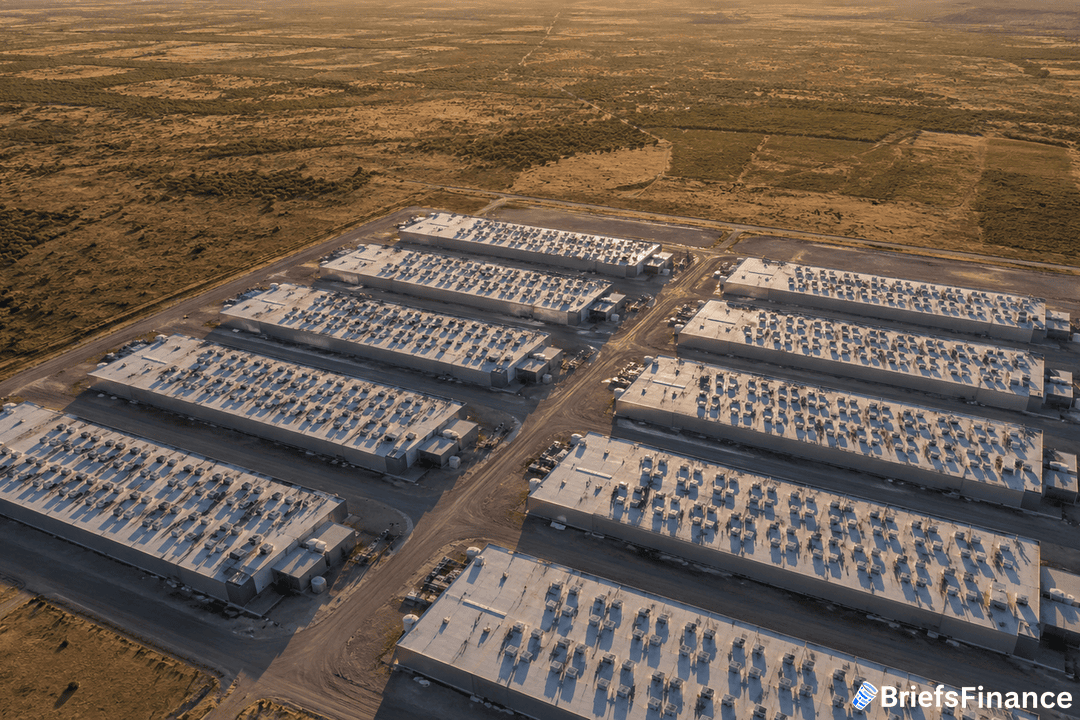

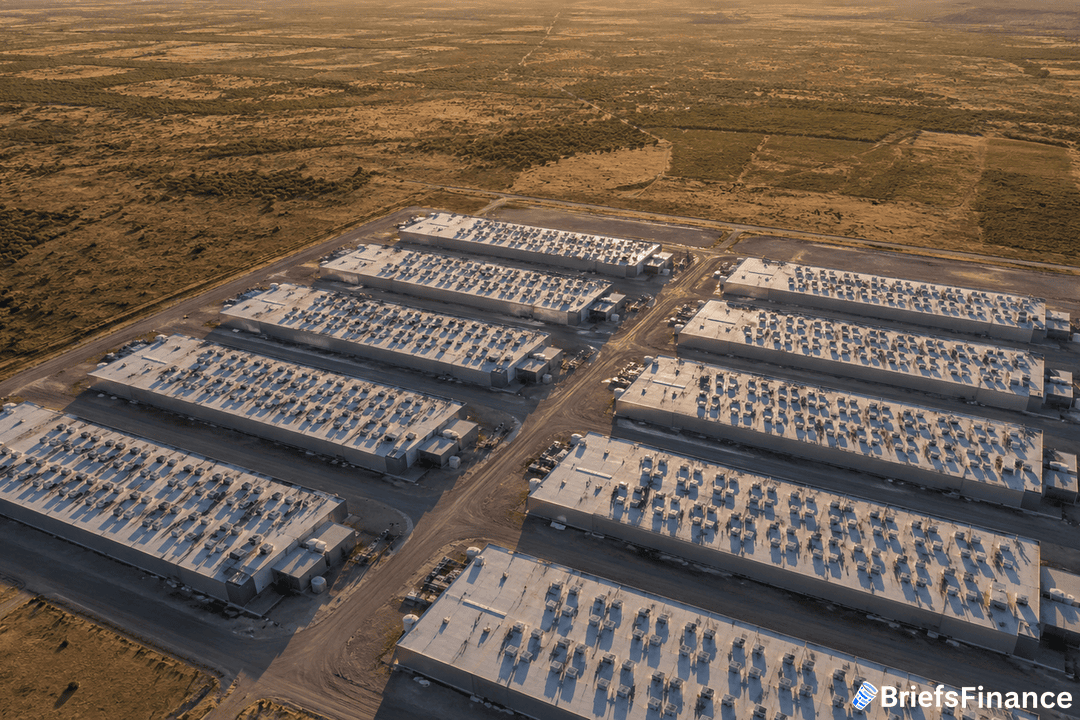

AI servers run hotter and longer than the older gear data centers were built for. Pew Research, citing the IEA, says one AI hyperscale site uses about as much power in a year as 100,000 homes.

The biggest sites being built will use 20 times that.

There were 5,426 data centers in the U.S. as of March 2025. In 2018, there were just 1,000.

That's more than a 5x jump in just seven years.
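A short check of that multiple, using only the two counts quoted above (the variable names are illustrative):

```python
# Data center counts quoted in the article.
CENTERS_2018 = 1_000
CENTERS_2025 = 5_426

# Ratio of the 2025 count to the 2018 count.
ratio = CENTERS_2025 / CENTERS_2018

# The same change expressed as a percentage increase over 2018.
percent_increase = (ratio - 1) * 100

print(f"Multiple: {ratio:.2f}x")
print(f"Increase: {percent_increase:.1f}%")
```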

Where the centers sit matters too. In 2023, data centers used 26% of all power in Virginia.

They also used double-digit shares of supply in North Dakota, Nebraska, Iowa, and Oregon, per the IEA.

What The Sector Is Doing About It

The U.S. grid had been running on flat power demand since 2007. That era is over.

Data center growth alone could account for 30% to 40% of all new U.S. power demand through 2030, per the McKinsey data.

So big tech is moving fast. Hyperscalers like Amazon, Microsoft, and Google are signing long-term power deals.

Some are with nuclear plants. Others are with gas turbine makers and fuel cell firms.

They're locking in the power they need before the grid catches up.

Why It Matters For Investors

The AI race is a power race. The chips and the models get the headlines.

But the real bottleneck is the wall socket.

For investors, that means the names making the picks and shovels - the power plants and the gear that runs them - have a story worth tracking.

The big buildout that's coming hasn't been priced into all the power names yet. That's the gap to watch.

Worth Noting

The IEA thinks worldwide data center power use will nearly double by 2030. The U.S. is the biggest piece of that growth.

The story isn't just about America. But this is where most of the cash will land.

Either way, the trend is clear. The next decade will look very different from the last one.

AI is reshaping power demand in a way the grid hasn't seen since the 1990s.