Broadcom has owned Google's custom AI silicon for more than a decade. That exclusivity looks to be ending.

Marvell stock jumped 6% Monday on a report that Google is in talks with the company to design two new AI chips. The first is a memory processing unit that pairs with Google's Tensor Processing Unit (TPU). The second is a new TPU built for inference, the cheaper, high-volume work of running AI models after they've been trained.

Google plans to produce nearly 2 million of the memory chips. Designs should be final by next year.

Why Google Is Splitting The Contract

Broadcom charges Google a big per-unit markup on every TPU that ships. That's fine when volumes are low and the chips sit in a handful of data centers. At the scale Google runs now, the markup adds up fast.

Bringing in a second vendor does two things. It gives Google more leverage in price negotiations with Broadcom. It also spreads supply chain risk across more than one chip design partner.

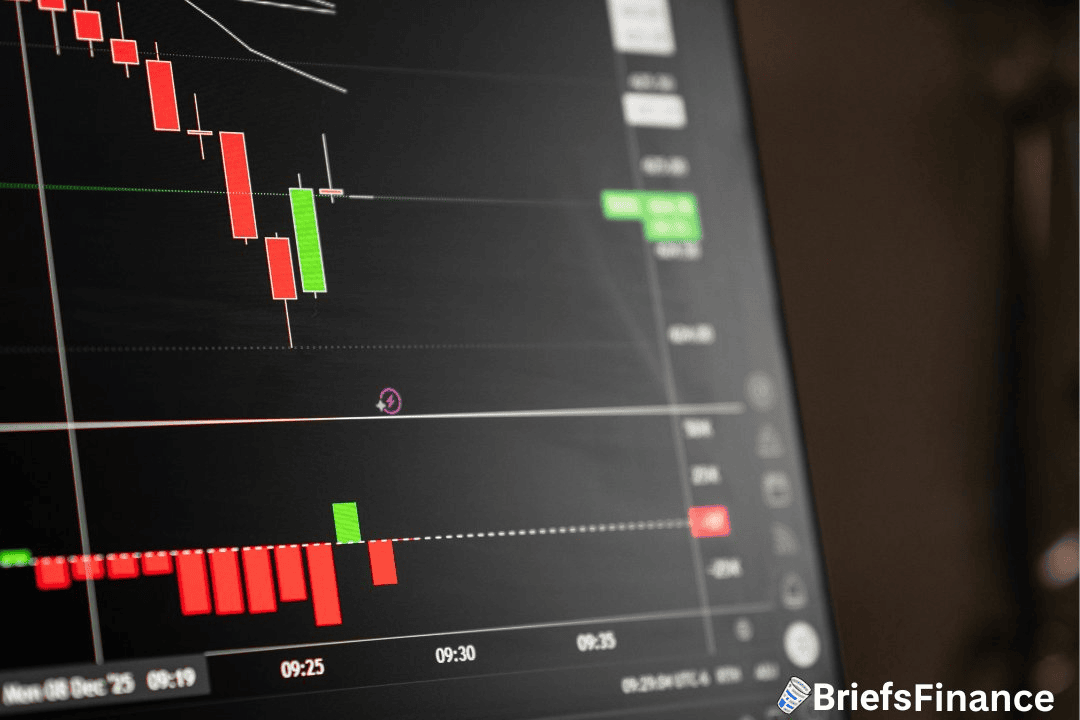

For Marvell, this is the second big win of the month. Nvidia already named it a networking partner for its next data center platform.

What It Means For Broadcom

Broadcom's AI chip business is the fastest-growing part of the company. Custom silicon for Google is a big chunk of that line. Losing exclusivity doesn't mean losing the customer, but it likely means smaller orders and thinner margins on what's left.

Think of it like being the only locksmith in town for 10 years. Then a second one opens across the street. You don't lose all your customers. But nobody pays you locksmith-emergency prices anymore.

Marvell's April Run

Marvell shares are up around 50% year to date, with 30% of that gain coming in April alone. The April run started when Nvidia named Marvell a networking partner for its next data center platform, the first marquee AI win of the month.

Now Google is the second, if the reported talks turn into a deal. That would put two of the biggest names in AI compute in business with Marvell inside a month.

The pattern underneath is bigger than Marvell. Hyperscalers that rely on a single chip designer are bringing in a second source. Nvidia's largest customers have been running that playbook for two years. The custom-silicon layer is now following.

Marvell is the obvious beneficiary because it already has the engineering team, the fab relationships, and the hyperscaler rolodex. Adding Google on top of Nvidia would put Marvell in the rare position of supplying two of the biggest AI compute platforms at the same time.

That's the kind of structural tailwind that's hard to fake with one quarter's numbers. It shows up in the backlog and the year-ahead guidance before it shows up in the headline numbers.

Worth Noting

The bigger story is the pattern. Every hyperscaler that relies heavily on one chip designer is now looking for a second source. That's been true of Nvidia's biggest customers for two years. Now it's happening at the custom layer too.

Broadcom's moat is narrower than it was Monday morning.